Prowler 4.0.0 – The Trooper

You’ll take my life, but I’ll take yours too

You’ll fire your musket, but I’ll run you through

So when you’re waiting for the next attack

You’d better stand, there’s no turning back

When I started Prowler almost eight years ago, I thought about calling it The Trooper (`thetrooper` as a command sounds good, but I thought `prowler` was even better). I can say today, without a doubt, that this version 4.0 of Prowler, The Trooper, is by far the software I always wanted to release. Now, as a company with a whole team dedicated to Prowler (Open Source and SaaS), this is even more exciting. With standard support for AWS, Azure, GCP and Kubernetes, and all the new features, this is the beginning of a new era where Open Cloud Security takes a step forward and we say: hey, WE ARE HERE FOR REAL, and when you’re waiting for the next attack, you’d better stand, there’s no turning back!

Enjoy Prowler – The Trooooooooper! 🤘🏽🔥 And enjoy the song!

Breaking Changes

- Allowlist is now called Mutelist.

- Deprecated the AWS flag `--sts-endpoint-region`, since we use AWS STS regional tokens.

- The `--quiet` option has been deprecated. Now use the `--status` flag to select the finding statuses you want to get: `PASS`, `FAIL` or `MANUAL`.

- To send only FAILs to AWS Security Hub, now use either `--send-sh-only-fails` or `--security-hub --status FAIL`.

- All `INFO` finding statuses have changed to `MANUAL`.

We have deprecated some of our output formats:

- The HTML output is replaced by the new Prowler Dashboard (`prowler dashboard`).

- The JSON output is replaced by JSON OCSF v1.1.0.
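If you have scripts that call Prowler v3, the flag changes above can be applied mechanically. The function below is a hypothetical helper and is not part of Prowler. It rewrites `--quiet` into `--status FAIL` and drops `--sts-endpoint-region` together with its value. It is only a sketch: it joins the arguments into a single string, so it assumes that no argument contains spaces.

```shell
# Hypothetical helper (not shipped with Prowler): rewrite a v3-style argument
# list into its v4 equivalent, following the breaking changes listed above.
# Prints the rewritten arguments as one space-separated string.
migrate_prowler_args() {
  out=""
  while [ "$#" -gt 0 ]; do
    case "$1" in
      --quiet)
        # v3 showed only FAIL findings; v4 selects statuses with --status.
        out="$out --status FAIL" ;;
      --sts-endpoint-region=*)
        # Deprecated in v4 (regional STS tokens are used): drop it.
        ;;
      --sts-endpoint-region)
        # Same flag in two-token form: also skip its value, if present.
        [ "$#" -gt 1 ] && shift ;;
      *)
        out="$out $1" ;;
    esac
    shift
  done
  printf '%s\n' "${out# }"
}

# Example: prints "aws --security-hub --status FAIL"
migrate_prowler_args aws --security-hub --quiet --sts-endpoint-region eu-west-1
```

The rewritten string can then be passed to the v4 CLI. Because `--security-hub --status FAIL` is one of the two documented ways to send only FAILs, the helper does not need a special case for Security Hub.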

New features to highlight in this version

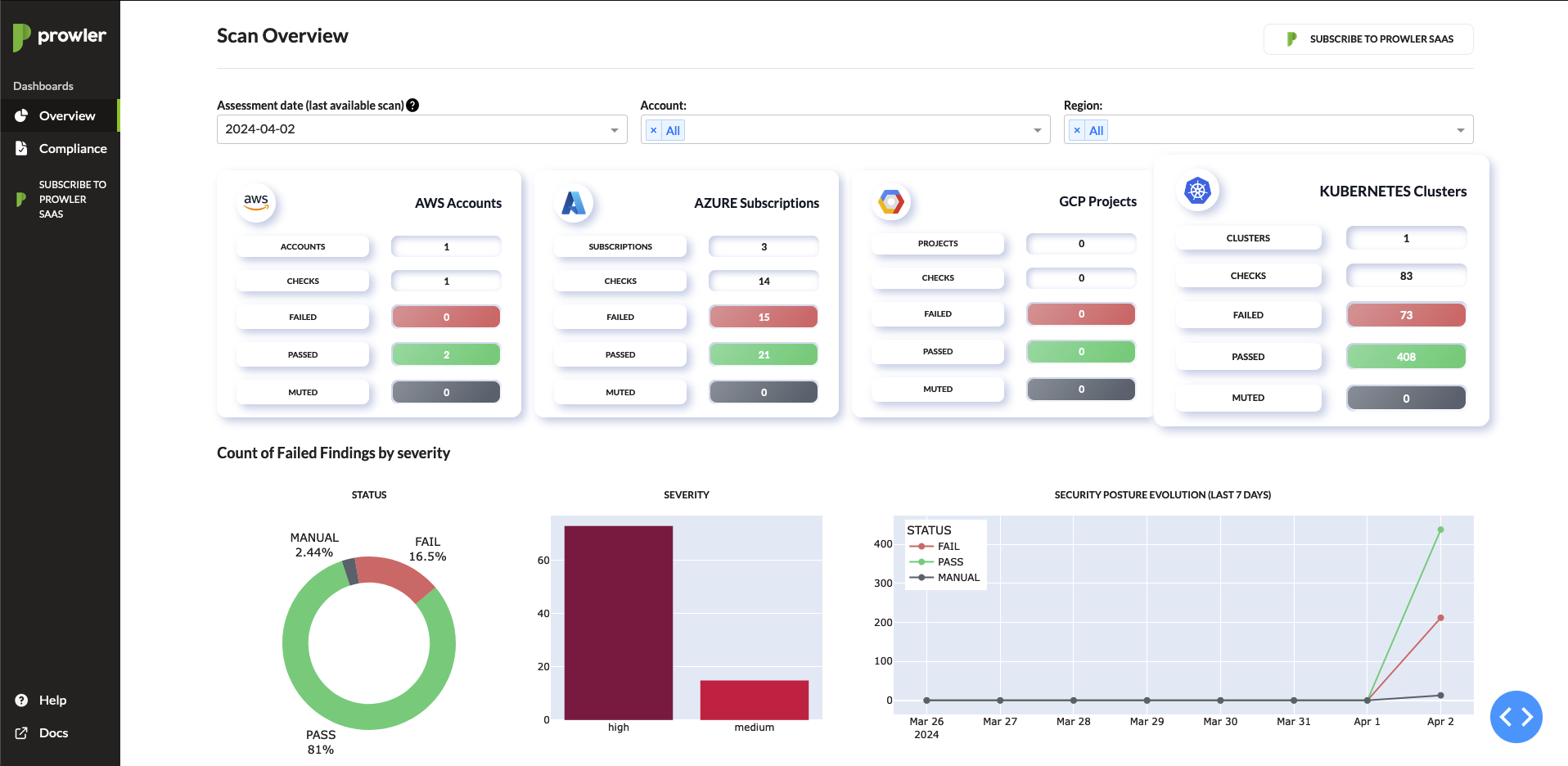

Dashboard

- Prowler has a local dashboard to make it easier to play with the gathered data. Run `prowler dashboard` and enjoy the overview and compliance data.

🎛️ New Kubernetes provider

- Prowler has a new Kubernetes provider to improve the security posture of your clusters! Try it now with `prowler kubernetes --kubeconfig-file <kube.yaml>`.

- CIS Benchmark 1.8 for K8s is included.

📄 Compliance

- All compliance frameworks are executed by default, and their outputs are stored in a new location: `output/compliance`.

AWS

- By default, the AWS provider does not scan unused services; you can enable that with `--scan-unused-services`.

- 2 new checks to detect possible threats from Enumeration and Privilege Escalation types of activity. Try them now with `prowler aws --category threat-detection`.

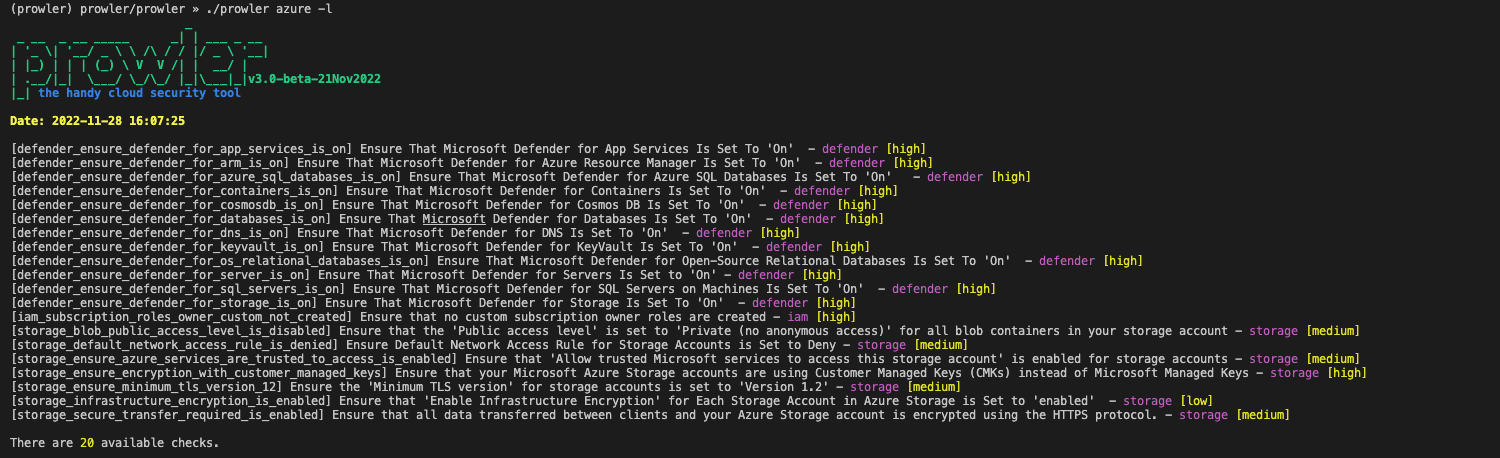

🗺️ Azure

- All Azure findings include the location!

- CIS Benchmarks 2.0 and 2.1 for Azure are included.

🔇 Mutelist

- The renamed Mutelist feature is available for all providers.

- In AWS, a default mutelist is included in the execution.
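As an illustration, here is a minimal sketch that writes a custom Mutelist file from the shell. It assumes the v4 Mutelist YAML layout: a top-level `Mutelist` key, with `Accounts` > `Checks` > `Regions`/`Resources` nested below it, where accounts, checks and regions can also be `"*"`. The account ID, region and user name are placeholders. The `--mutelist-file` flag shown in the final comment is also an assumption; confirm it with `prowler aws --help`.

```shell
# Sketch: write a minimal Mutelist file (layout assumed, values are placeholders).
cat > mutelist.yaml <<'EOF'
Mutelist:
  Accounts:
    "123456789012":
      Checks:
        "iam_user_hardware_mfa_enabled":
          Regions:
            - "us-east-1"
          Resources:
            - "user-1"   # findings for user-1 in this check are muted
EOF

# Then pass it to a scan (flag name assumed; check `prowler aws --help`):
# prowler aws --mutelist-file mutelist.yaml
```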

🌐 Outputs

- Prowler now generates its outputs in a common format for all providers.

- The only JSON output now follows the OCSF Schema v1.1.0.

💻 Providers

- We have unified the way new providers are included, making development easier and new providers simpler to add.

🔨 Fixer

- We have included a new argument, `--fix`, to allow you to remediate findings. You can list all the available fixers with `prowler aws --list-fixers`.

Prowler v3 – Piece of Mind

Today we are releasing a new major version of Prowler 🎉🥳🎊🍾: Version 3, aka Piece of Mind.

Take Prowler v3 as our 🎄Christmas gift 🎁 for the Cloud Security Community.

Artwork property of Iron Maiden

Piece of Mind was Iron Maiden’s fourth studio album. Its meaning fits perfectly with what we do with Prowler, in both senses: being protected and, at the same time, being the software I would have wanted to write when I started Prowler back in 2016 (it is now, more than ever, a piece of my mind). This has been possible thanks to my awesome team at Verica.

There is no doubt that 2022 has been a pretty interesting year for us: we launched ProwlerPro and released many minor versions of Prowler. Now enjoy Sun and Steel while you keep reading these release notes.

If you are an Iron Maiden fan as I am, you may have noticed that the latest minor release of Prowler (2.12) was named after a song from this very same album, just a clue of what was coming! Piece of Mind includes one of the most popular heavy metal songs of all time, The Trooper, which will be the name of a Prowler version to be released during 2023.

Prowler v3 is more than a new version of Prowler; it is a whole new piece of software. We have fully rewritten it in Python and made it multi-cloud, adding Azure as our second supported cloud provider. Prowler v3 is also way faster: it can scan an entire AWS account across all regions 37 times faster than before. Yes, you read that correctly! What used to take hours now takes literally a few minutes or even seconds.

New documentation site:

We are also releasing our brand new documentation site for Prowler today at https://docs.prowler.cloud; its content is also stored in the `docs` folder of the repo.

What’s Changed:

Here is a list of the most important changes in Prowler v3:

- 🐍 Python: we got rid of all the bash code and it is now all in Python. Just `pip install prowler`, then run `prowler`, that’s all.

- 🚀 Faster: huge performance improvements. Scanning the same account now takes 4 minutes instead of 2.5 hours.

- 💻 Developers and Community: we have made it easier to contribute new checks and new compliance frameworks. We also included unit tests and native logging features. And now the CLI supports long arguments and options.

- ☁️ Multi-cloud: in addition to AWS, we have added Azure.

- ✅ Checks and Groups: all checks are now more comprehensive and we provide resolution actions in most of them. Their IDs are no longer tied to CIS; instead, they are self-explanatory. Groups are now dynamically generated based on check metadata such as services, categories, severity and more.

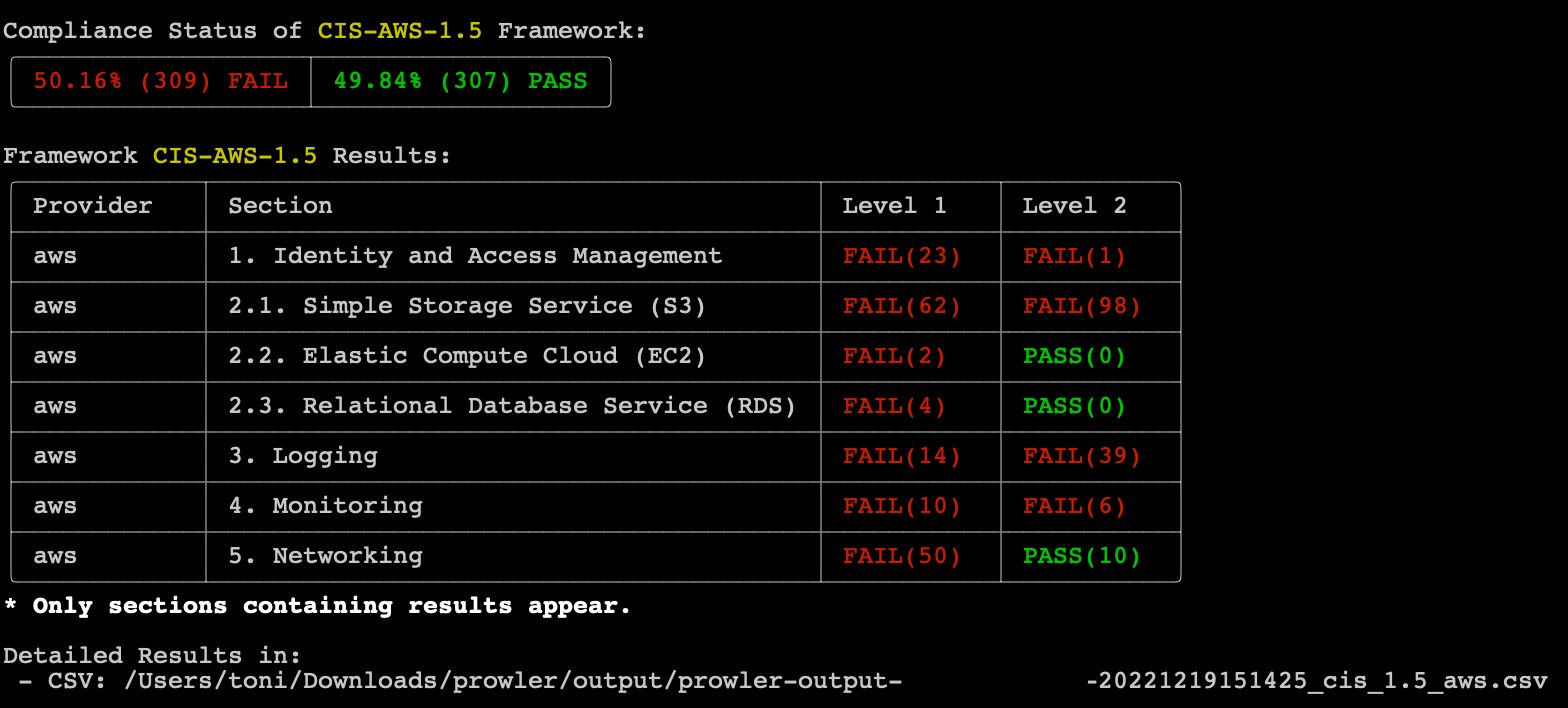

- ⚖️ Compliance: we are including full support for CIS 1.4, CIS 1.5 and the new Spanish ENS in this release, with more to come soon! Compliance also has its own output file with its own metadata, and creating your own framework is easier than ever, which makes for more comprehensive reports.

- 🧩 Compatibility with v2: most of the options are the same in this version to support backward compatibility; however, some options, like assume role or AWS Organizations queries, are now different and easier to use.

- 🔄 Consolidated output formats: both CSV and JSON reports now come with the same attributes and, compared to v2, they include more than 40 values per finding. HTML, CSV and JSON reports are created every time you run `prowler`.

- 📊 Quick Inventory: introduced in v2, we have fine-tuned the Quick Inventory feature and now you can get a list of all resources in your AWS accounts within seconds.

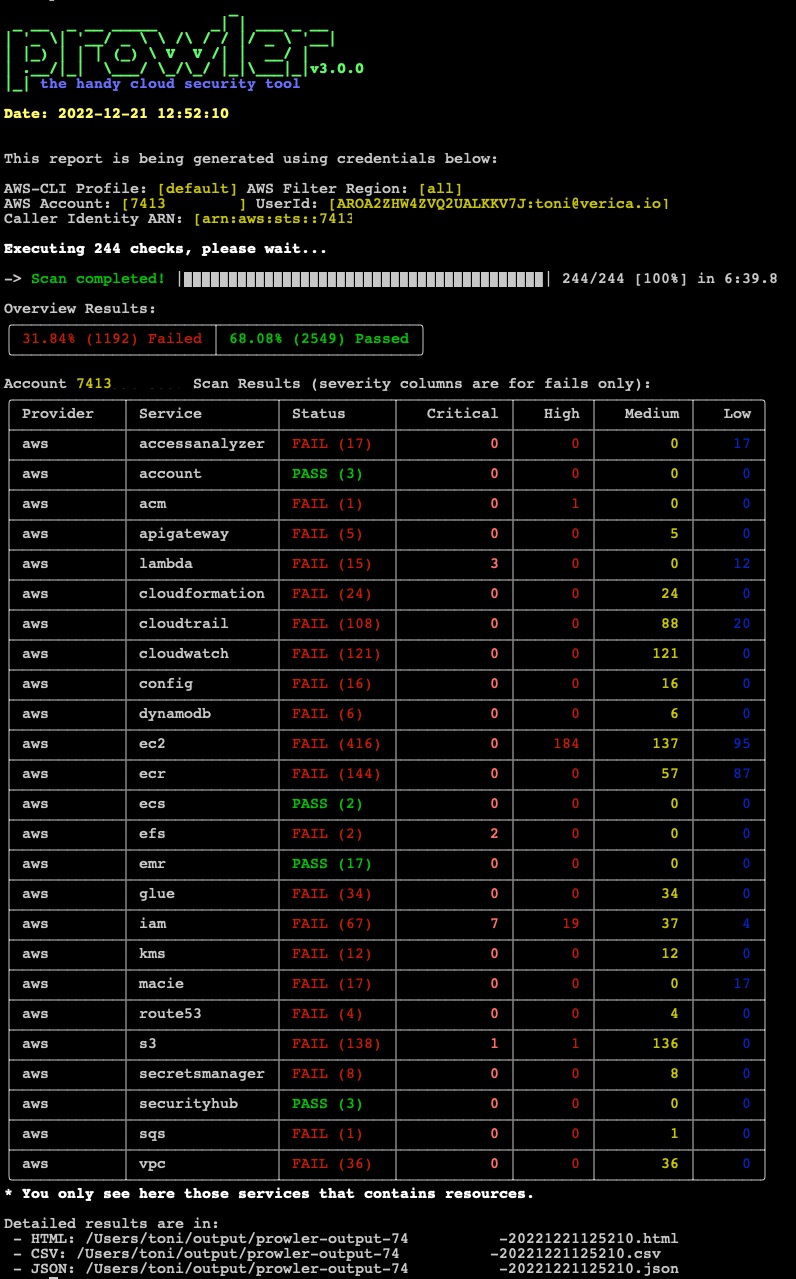

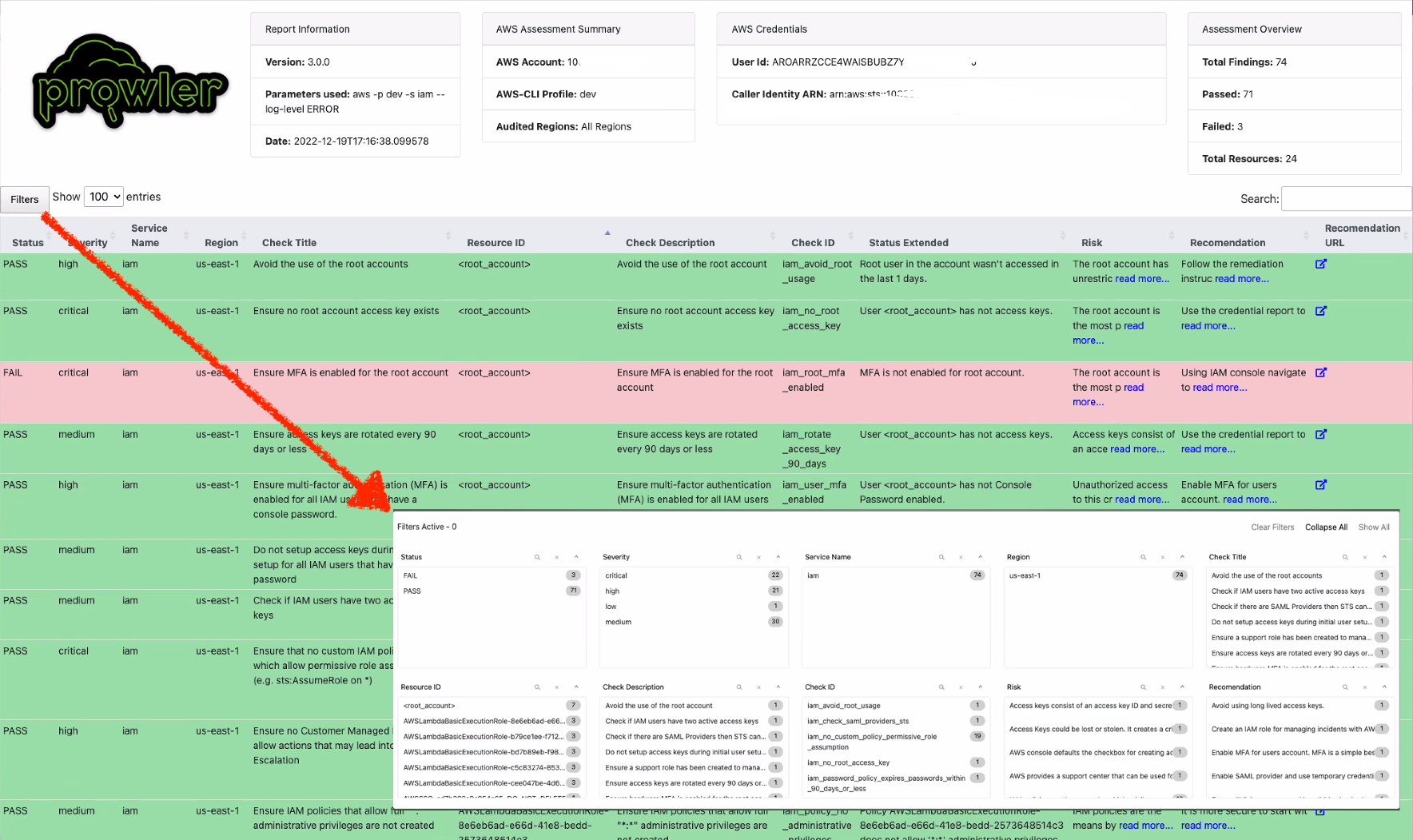

Prowler new default overview:

Prowler updated HTML report:

Prowler compliance overview:

Prowler list of Azure checks:

What is coming next?

- More Cloud Providers and more checks: in addition to adding new checks to AWS and Azure, we plan to include GCP and OCI soon. Let us know if you want to contribute!

- XML-JUNIT support: we didn’t add it to v3; if you miss it, let us know at https://github.com/prowler-cloud/prowler/discussions

- Compliance: we will add more compliance frameworks to match the number available in Prowler v2, and we would appreciate help with that!

- Tags based audit: you will be able to scan only those resources with specific tags.

Prowler Pro and Verica Announcement

Hi there!

I’m so happy to announce that I’ve joined Verica! Thanks to their support, we have invested a lot in Prowler, and today we are announcing the availability of Prowler Pro!

As many of you have noticed, Prowler is growing fast and getting better. We are now 4 full-time engineers. Pepe Fagoaga, Nacho Rivera and Sergio Garcia are the Prowler Pro dream team, along with a vibrant community. We are working every day to make Prowler better and more comprehensive, and with that we are also launching Prowler Pro.

Our main goal is to keep our hands on the Open Source version while giving customers a better enterprise-level experience with Prowler Pro.

Prowler 2.8.0 – Ides of March

The Ides of March is an instrumental song that opens Killers, the second studio album of Iron Maiden. This song is a great opener: March is the month when spring starts on my side of the world, and it is always a time for optimism. The Ides of March is also March 15 in the Roman calendar (the day Julius Caesar was assassinated). Enjoy the song here.

We have put our best into this release, with important help from the Prowler community of cloud security engineers around the world. Thank you all! Special thanks to the Prowler full-time engineers @jfagoagas, @n4ch04 and @sergargar! (and Bruce, my dog) ❤️

Important changes in this version (read this!):

Now, if you have AWS Organizations and are scanning multiple accounts using the assume role functionality, Prowler can get your account details like Account Name, Email, ARN, Organization ID and Tags and add them to CSV and JSON output formats. More information and usage here.

New Features

- 1 new check for S3 buckets with ACLs enabled by @jeffmaley in #1023:

7.172 [extra7172] Check if S3 buckets have ACLs enabled - s3 [Medium]

- feat(metadata): Include account metadata in Prowler assessments by @toniblyx in #1049

Enhancements

- Add whitelist examples for Control Tower resources by @lorchda in #1013

- Skip packages with broken dependencies when upgrading system by @dlorch in #1009

- Docs: Improve check_sample examples, add general comments by @lazize in #1039

- Added timestamp to temp folders for secrets related checks by @sectoramen in #1041

- Make python3 default in Dockerfile by @sectoramen in #1043

- Docs(readme): Fix typo by @jfagoagas in #1072

Fixes

- Fix issue extra75 reports default SecurityGroups as unused #1001 by @jansepke in #1006

- Fix issue extra793 filtering out network LBs #1002 by @jansepke in #1007

- Fix formatting by @lorchda in #1012

- Fix docker references by @mike-stewart in #1018

- Fix(check32): filterName base64encoded to avoid space problems in filter names by @n4ch04 in #1020

- Fix: when prowler exits with a non-zero status, the remainder of the block is not executed by @lorchda in #1015

- Fix(extra7148): Error handling and include missing policy by @toniblyx in #1021

- Fix(extra760): Error handling by @lazize in #1025

- Fix(CODEOWNERS): Rename team by @jfagoagas in #1027

- Fix(include/outputs): Whitelist logic reformulated to exactly match input by @n4ch04 in #1029

- Fix CFN CodeBuild example by @mmuller88 in #1030

- Fix typo CodeBuild template by @dlorch in #1010

- Fix(extra736): Recover only Customer Managed KMS keys by @jfagoagas in #1036

- Fix(extra7141): Error handling and include missing policy by @lazize in #1024

- Fix(extra730): Handle invalid date formats checking ACM certificates by @jfagoagas in #1033

- Fix(check41/42): Added tcp protocol filter to query by @n4ch04 in #1035

- Fix(include/outputs): Rolling back whitelist checking to RE check by @n4ch04 in #1037

- Fix(extra758): Reduce API calls. Print correct instance state. by @lazize in #1057

- Fix: extra7167 Advanced Shield and CloudFront bug parsing None output without distributions by @NMuee in #1062

- Fix(extra776): Handle image tag commas and json output by @jfagoagas in #1063

- Fix(whitelist): Whitelist logic reformulated again by @n4ch04 in #1061

- Fix: Change lower case from bash variable expansion to tr by @lazize in #1064

- Fix(check_extra7161): fixed check title by @n4ch04 in #1068

- Fix(extra760): Improve error handling by @lazize in #1055

- Fix(check122): Error when policy name contains commas by @plarso in #1067

- Fix: Remove automatic PR labels by @jfagoagas in #1044

- Fix(ES): Improve AWS CLI query and add error handling for ElasticSearch/OpenSearch checks by @lazize in #1032

- Fix(extra771): jq fail when policy action is an array by @lazize in #1031

- Fix(extra765/776): Add right region to CSV if access is denied by @roman-mueller in #1045

- Fix: extra7167 Advanced Shield and CloudFront bug parsing None output without distributions by @NMuee in #1053

- Fix(filter-region): Support comma separated regions by @thetemplateblog in #1071

New Contributors

- @jansepke made their first contribution in #1006

- @lorchda made their first contribution in #1012

- @mike-stewart made their first contribution in #1018

- @n4ch04 made their first contribution in #1020

- @jeffmaley made their first contribution in #1023

- @roman-mueller made their first contribution in #1045

- @NMuee made their first contribution in #1053

- @plarso made their first contribution in #1067

- @thetemplateblog made their first contribution in #1071

- @sergargar made their first contribution in #1073

Full Changelog: 2.7.0…2.8.0

Prowler 2.7.0 – Brave

This release name is in honor of Brave New World, a great song by 🔥Iron Maiden🔥 from their Brave New World album. It is dedicated to all of you looking forward to having the world we had before COVID… We hope it is not hitting you too hard. Enjoy the rest of the note below.

Important changes in this version (read this!):

- As you can see, Prowler is now in a new organization called https://github.com/prowler-cloud/.

- When Prowler doesn’t have permissions to check a resource or service, it now reports INFO instead of FAIL. We have improved the error handling of all checks for cases where the CLI responds with an AccessDenied, UnauthorizedOperation or AuthorizationError.

- From this version, the `master` branch will contain the latest available code, and we will keep the stable code in each release. If you are installing or deploying Prowler using `git clone` on master, take that into account and use the latest release instead, i.e.: `git clone --branch 2.7 https://github.com/prowler-cloud/prowler` or `curl -L https://github.com/toniblyx/prowler/archive/refs/tags/2.7.0.tar.gz -o prowler-2.7.0.tar.gz`.

- For known issues, please see https://github.com/prowler-cloud/prowler/issues, the open ones with the red `bug` tag.

- Discussions are now open in the Prowler repo at https://github.com/prowler-cloud/prowler/discussions; feel free to use them if that works better for you than the current Discord server.

- 11 new checks!! Thanks to @michael-dickinson-sainsburys, @jonloza, @rustic, @Obiakara, @Daniel-Peladeau, @maisenhe, @7thseraph. There are now a total of 218 checks. See below for details.

- The Security Hub integration now resolves closed findings as expected when there are either a lot of new findings or a lot of resolved findings, thanks to @Kirizan.

- Credential chaining failed when credentials were provided in environment variables; that was fixed by @lazize.

- See below new features and more details for this version.

New Features

- 11 New checks for Redshift, EFS, CloudWatch, Secrets Manager, DynamoDB and Shield Advanced:

7.160 [extra7160] Check if Redshift has automatic upgrades enabled - redshift [Medium]

7.161 [extra7161] Check if EFS protects sensitive data with encryption at rest - efs [Medium]

7.162 [extra7162] Check if CloudWatch Log Groups have a retention policy of 365 days - cloudwatch [Medium]

7.163 [extra7163] Check if Secrets Manager key rotation is enabled - secretsmanager [Medium]

7.164 [extra7164] Check if CloudWatch log groups are protected by AWS KMS - logs [Medium]

7.165 [extra7165] Check if DynamoDB: DAX Clusters are encrypted at rest - dynamodb [Medium]

7.166 [extra7166] Check if Elastic IP addresses with associations are protected by AWS Shield Advanced - shield [Medium]

7.167 [extra7167] Check if Cloudfront distributions are protected by AWS Shield Advanced - shield [Medium]

7.168 [extra7168] Check if Route53 hosted zones are protected by AWS Shield Advanced - shield [Medium]

7.169 [extra7169] Check if global accelerators are protected by AWS Shield Advanced - shield [Medium]

7.170 [extra7170] Check if internet-facing application load balancers are protected by AWS Shield Advanced - shield [Medium]

7.171 [extra7171] Check if classic load balancers are protected by AWS Shield Advanced - shield [Medium]

- Add `-D` option to copy to S3 with the initial AWS credentials instead of the assumed role credentials used by the `-B` option, by @sectoramen in #974

- Add new functions to back up and restore initial AWS credentials, for better handling of role chaining, by @sectoramen in #978

- Add additional action permissions for Glue and Shield Advanced checks by @lazize in #995

Enhancements

- Update Dockerfile to use Amazon Linux container image by @Kirizan in #972

- Update Readme: `-T` option is not mandatory by @jfagoagas in #944

- Add `$PROFILE_OPT` to CopyToS3 commands by @sectoramen in #976

- Remove unneeded package “file” from Dockerfile by @sectoramen in #977

- Update docs (templates): Improve bug template with more info by @jfagoagas in #982

Fixes

- Fix in README and multiaccount serverless deployment templates by @dlorch in #939

- Fix assume-role: check if `-T` and `-A` options are set together by @jfagoagas in #945

- Fix `group25` FTR by @lopmoris in #948

- Fix in README link for `group25` FTR by @lopmoris in #949

- Fix issue #938 assume_role multiple times by @halfluke in #951

- Fix and clean assume-role to better handle AWS STS CLI errors by @jfagoagas in #946

- Fix issue with Security Hub integration when resolving closed findings are either a lot of new findings, or a lot of resolved findings by @Kirizan in #953

- Fix broken link in README.md by @rtcms in #966

- Fix checks with comma issues in checks by @j2clerck in #975

- Fix: Credential chaining from environment variables by @lazize in #996

New Contributors

- @jonloza made their first contribution in #932

- @Obiakara made their first contribution in #935

- @dlorch made their first contribution in #939

- @Daniel-Peladeau made their first contribution in #937

- @lopmoris made their first contribution in #948

- @halfluke made their first contribution in #951

- @maisenhe made their first contribution in #956

- @rtcms made their first contribution in #966

- @sectoramen made their first contribution in #974

- @j2clerck made their first contribution in #975

- @lazize made their first contribution in #995

Full Changelog: 2.6.1…2.7

Prowler 2.6.0 – Phantom

This release name is in honor of Phantom of the Opera, one of my favorite songs and a masterpiece by 🔥Iron Maiden🔥. It starts with “I’ve been lookin’ so long for you now”, just like looking for security issues, isn’t it? 🤘🏼 Enjoy it here while reading the rest of this note.

Important changes in this version:

- The CIS level parameter (ITEM_LEVEL) has been restored to the CSV, JSON and HTML outputs (it was removed in 2.5). CIS Scored is not added, since it is not relevant in the global Prowler reports. dd398a9

- The Security Hub integration has been fixed to resolve a conflict with duplicated findings in the management account, by @xeroxnir

- 12 new checks!! Thanks to @kbgoll05, @qumei, @georgie969, @ShubhamShah11, @jarrettandrulis, @dsensibaugh, @ManuelUgarte, @tekdj7. There are now a total of 207 checks. See below for details.

- For known issues, please review https://github.com/toniblyx/prowler/issues?q=is%3Aissue+is%3Aopen+label%3Abug.

- Now there is a Discord server for Prowler available, check it out in README.md.

- There is a maintained Docker Hub repo for Prowler, and an AWS ECR public repo as well. See the badges in README.md for details.

- See the new features below for more details about the cool new stuff in this version.

New Features:

- 12 new checks for efs, redshift, elb, dynamodb, route53, cloudformation and apigateway:

7.148 [extra7148] Check if EFS File systems have backup enabled - efs [Medium]

7.149 [extra7149] Check if Redshift Clusters have automated snapshots enabled - redshift [Medium]

7.150 [extra7150] Check if Elastic Load Balancers have deletion protection enabled - elb [Medium]

7.151 [extra7151] Check if DynamoDB tables point-in-time recovery (PITR) is enabled - dynamodb [Medium]

7.152 [extra7152] Enable Privacy Protection for a Route53 Domain - route53 [Medium]

7.153 [extra7153] Enable Transfer Lock for a Route53 Domain - route53 [Medium]

7.154 [extra7154] Enable termination protection for Cloudformation Stacks - cloudformation [MEDIUM]

7.155 [extra7155] Check whether the Application Load Balancer is configured with defensive or strictest desync mitigation mode - elb [MEDIUM]

7.156 [extra7156] Checks if API Gateway V2 has Access Logging enabled - apigateway [Medium]

7.157 [extra7157] Check if API Gateway V2 has configured authorizers - apigateway [Medium]

7.158 [extra7158] Check if ELBV2 has listeners underneath - elb [Medium]

7.159 [extra7159] Check if ELB has listeners underneath - elb [Medium]

- New checks group FTR (AWS Foundational Technical Review) by @jfagoagas

- New feature: added flags such as `-Z` to control whether Prowler returns exit code 3 on a failed check, by @Kirizan in #865

- New Prowler Terraform Kickstarter by @singergs

- New way to deploy Prowler at Organizational level with serverless by @bella-kwon

- New feature: added the ability to provide a file with the checks to be run via `-C`, by @Kirizan in #891

Enhancements:

- Enhanced scoring when only INFO is detected

- Enhanced ignore archived findings in GuardDuty for check extra7139 by @chbiel in https://github.com/toniblyx/prowler/pull/851

- Updated prowler-codebuild-role name for CFN StackSets name length limit by @varunirv in #846

- Added feature to allow role ARN while using -R parameter by @mmuller88 in #860

- Updated documentation regarding a confusion with the `-q` option (issue #884) by @w0rmr1d3r in #890

Fixes:

- Fixed extra737 remove false positives due to policies with condition by @rinaudjaws in #849

- Fixed title, remediation and doc link for check extra768 by @w0rmr1d3r in #853

- Fixed typo in risk description for check29 by @kamiryo in #858

- Fixed bug in extra784 by @tayivan-sg in #856

- Fixed support policy arn in check120 by @hersh86 in #862

- Fixed typo and HTTP capitalisation in extra7142 by @acknosyn in #863

- Fixed Security Hub conflict with duplicated findings in the management account #711 by @xeroxnir in #873

- Fixed doc reference link in check23 @FallenAtticus by @FallenAtticus in #864

- Fixed duplicated region in textFail message for extra741 by @pablopagani in #880

- Updated parts from check7152 accidentally left in by @jarrettandrulis in #895

- Fix check extra734 about S3 buckets default encryption with StringNotEquals by @rustic in #896

- Fix Shodan typo in -h usage text by @jfagoagas in #899

- Fixed typo in README.md by @bevel-zgates in #908

New Contributors

- @varunirv made their first contribution in #846

- @rinaudjaws made their first contribution in #849

- @chbiel made their first contribution in #851

- @tayivan-sg made their first contribution in #856

- @bella-kwon made their first contribution in #857

- @mmuller88 made their first contribution in #860

- @hersh86 made their first contribution in #862

- @acknosyn made their first contribution in #863

- @FallenAtticus made their first contribution in #864

- @georgie969 made their first contribution in #866

- @ManuelUgarte made their first contribution in #869

- @jarrettandrulis made their first contribution in #875

- @ShubhamShah11 made their first contribution in #877

- @dsensibaugh made their first contribution in #889

- @rustic made their first contribution in #896

- @zqumei0 made their first contribution in #894

- @bevel-zgates made their first contribution in #908

Full Changelog: 2.5.0…2.6.0

Thank you all for your contributions, Prowler community is awesome! 🥳

Run Prowler from AWS CloudShell in seconds

Using AWS CloudShell is probably the easiest and quickest way to run Prowler in your AWS account.

Just start AWS CloudShell and run these commands:

git clone https://github.com/toniblyx/prowler

pip3 install detect-secrets --user

cd prowler

./prowler

If you run Prowler and it takes longer than your CloudShell session lasts, you can use the screen command line tool (a screen manager with VT100/ANSI terminal emulation). To install it:

sudo yum install screen -y

Run Prowler in a screen session:

screen -dmS prowler sh -c "./prowler -M html"

Check existing running screen sessions:

screen -ls

Attach to the Prowler session:

screen -r prowler

Use ‘Ctrl+a d’ to detach without terminating.

If you want to run Prowler from CloudShell against multiple accounts, first declare a variable with all the accounts (12-digit AWS account IDs) you want to assess:

export AWS_ACCOUNTS='111111111111 222222222222 333333333333'

Then, make sure you have a role to assume in each of those accounts. This template (create_role_to_assume_cfn.yaml) may help. Then run this command:

for accountId in $AWS_ACCOUNTS; do screen -dmS "prowler-$accountId" sh -c "./prowler -A $accountId -R ProwlerExecRole -M csv,json,html"; done
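Here is a slightly more defensive sketch of that loop, wrapped in a shell function. It skips anything that is not a 12-digit AWS account ID, and it gives each screen session its own per-account name. The names `launch_prowler_sessions`, `is_account_id` and `PROWLER_ROLE` are hypothetical. `./prowler`, `ProwlerExecRole` and the v2 flags come from the command above.

```shell
# Sketch of a more defensive version of the loop above (Prowler v2 syntax).
# Assumptions: ./prowler is the v2 script in the current directory and the
# role named by PROWLER_ROLE exists in every target account.
PROWLER_ROLE="${PROWLER_ROLE:-ProwlerExecRole}"

# Return success only for a 12-digit AWS account ID.
is_account_id() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;
  esac
  [ "${#1}" -eq 12 ]
}

# Start one detached screen session per valid account in $AWS_ACCOUNTS.
launch_prowler_sessions() {
  for accountId in $AWS_ACCOUNTS; do
    if ! is_account_id "$accountId"; then
      echo "skipping invalid account ID: $accountId" >&2
      continue
    fi
    # A per-account session name lets you reattach with: screen -r prowler-<accountId>
    screen -dmS "prowler-$accountId" \
      sh -c "./prowler -A $accountId -R $PROWLER_ROLE -M csv,json,html"
  done
}
```

After exporting `AWS_ACCOUNTS`, run `launch_prowler_sessions`. Then use `screen -ls` to see one `prowler-<accountId>` session per account.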

For more options and details go to: https://github.com/toniblyx/prowler or run ./prowler -h.

Prowler 2.0: New release with improvements and new checks ready for re:Invent and BlackHat EU

Taking advantage of this week’s AWS re:Invent and next week’s BlackHat Europe, I wanted to push out a new version of Prowler.

In case you are new to Prowler:

Prowler is an AWS Security Best Practices Assessment, Auditing, Hardening and Forensics Readiness Tool. It follows the guidelines of the CIS Amazon Web Services Foundations Benchmark and includes DOZENS of additional checks, including GDPR and HIPAA groups. The official CIS benchmark guide for AWS is here.

This new version has more than 20 new extra checks (out of 90+), including GDPR and HIPAA groups of checks as a reference to help organizations check the status of their infrastructure regarding those regulations. Prowler has also been refactored to allow easier extensibility. Another important feature is the JSON output, which allows Prowler to be integrated with, for example, Splunk or Wazuh (more about that soon!). For all the details about what is new, fixes and improvements, please see the release notes here: https://github.com/toniblyx/prowler/releases/tag/2.0

For me, personally, there are two main benefits of Prowler. First, it helps many organizations and individuals around the world improve their security posture on AWS: with just one easy and simple command, they realize what they have to do and how to get started with their hardening. Second, I’m learning a lot about AWS, its API, features, limitations, differences between services and AWS security in general.

That said, I’m so happy to present Prowler 2.0 at BlackHat Europe next week in London! It will be at the Arsenal, and I’ll talk about AWS security and show all the new features, how it works, how to take advantage of all checks and output methods, and some other cool things. If you are around, please come by and say hello; I’ve got a bunch of laptop stickers! Here are all the details: Location: Business Hall, Arsenal Station 2. Date: Wednesday, December 5 | 3:15pm-4:50pm. Track: Vulnerability Assessment. Session Type: Arsenal

BIG THANKS!

I want to thank the Open Source community that has helped out since day one: almost a thousand stars on GitHub and more than 500 commits speak for themselves. Prowler has become pretty popular out there and all the community support is awesome; it motivates me to keep up with improvements and features. Thanks to you all!!

Prowler future?

The main goals for future versions are to improve speed and reporting, including switching the base code to Python to support existing checks and new ones in any language.

If you are interested in helping out, don’t hesitate to reach out to me. \m/

My arsenal of AWS security tools

I’ve been using and collecting a list of helpful tools for AWS security. This list covers the ones I have tried at least once and think are worth a look, for your own benefit and, most importantly, to make your AWS cloud environment more secure.

They are not in any specific order; I just wanted to group them somehow. I have my favorites depending on the requirements, but you will find yours once you test them.

New additions at https://github.com/toniblyx/my-arsenal-of-aws-security-tools

Defensive (Hardening, Security Assessment, Inventory)

- Scout2: https://github.com/nccgroup/Scout2 – Security auditing tool for AWS environments (Python)

- Prowler: https://github.com/toniblyx/prowler – CIS benchmarks and additional checks for security best practices in AWS (Shell Script)

- Scans: https://github.com/cloudsploit/scans – AWS security scanning checks (NodeJS)

- CloudMapper: https://github.com/duo-labs/cloudmapper – helps you analyze your AWS environments (Python)

- CloudTracker: https://github.com/duo-labs/cloudtracker – helps you find over-privileged IAM users and roles by comparing CloudTrail logs with current IAM policies (Python)

- AWS Security Benchmarks: https://github.com/awslabs/aws-security-benchmark – scripts and templates guidance related to the AWS CIS Foundation framework (Python)

- AWS Public IPs: https://github.com/arkadiyt/aws_public_ips – Fetch all public IP addresses tied to your AWS account. Works with IPv4/IPv6, Classic/VPC networking, and across all AWS services (Ruby)

- PMapper: https://github.com/nccgroup/PMapper – Advanced and Automated AWS IAM Evaluation (Python)

- AWS-Inventory: https://github.com/nccgroup/aws-inventory – Make an inventory of all your resources across regions (Python)

- Resource Counter: https://github.com/disruptops/resource-counter – Counts number of resources in categories across regions

Offensive:

- weirdAAL: https://github.com/carnal0wnage/weirdAAL – AWS Attack Library

- Pacu: https://github.com/RhinoSecurityLabs/pacu – AWS penetration testing toolkit

- Cred Scanner: https://github.com/disruptops/cred_scanner

- AWS PWN: https://github.com/dagrz/aws_pwn

- Cloudfrunt: https://github.com/MindPointGroup/cloudfrunt

- Cloudjack: https://github.com/prevade/cloudjack

- Nimbostratus: https://github.com/andresriancho/nimbostratus

Continuous Security Auditing:

- Security Monkey: https://github.com/Netflix/security_monkey

- Krampus (as a Security Monkey complement): https://github.com/sendgrid/krampus

- Cloud Inquisitor: https://github.com/RiotGames/cloud-inquisitor

- CloudCustodian: https://github.com/capitalone/cloud-custodian

- Disable keys after X days: https://github.com/te-papa/aws-key-disabler

- Repokid Least Privilege: https://github.com/Netflix/repokid

- Wazuh CloudTrail module: https://documentation.wazuh.com/current/amazon/index.html

DFIR:

- AWS IR: https://github.com/ThreatResponse/aws_ir – AWS specific Incident Response and Forensics Tool

- Margaritashotgun: https://github.com/ThreatResponse/margaritashotgun – Linux memory remote acquisition tool

- LiMEaide: https://kd8bny.github.io/LiMEaide/ – Linux memory remote acquisition tool

- Diffy: https://github.com/Netflix-Skunkworks/diffy – Triage tool used during cloud-centric security incidents

Development Security:

- CFN NAG: https://github.com/stelligent/cfn_nag – CloudFormation security test (Ruby)

- Git-secrets: https://github.com/awslabs/git-secrets

- Repository of sample Custom Rules for AWS Config: https://github.com/awslabs/aws-config-rules

S3 Buckets Auditing:

- https://github.com/Parasimpaticki/sandcastle

- https://github.com/smiegles/mass3

- https://github.com/koenrh/s3enum

- https://github.com/tomdev/teh_s3_bucketeers/

- https://github.com/eth0izzle/bucket-stream

- https://github.com/gwen001/s3-buckets-finder

- https://github.com/aaparmeggiani/s3find

- https://github.com/bbb31/slurp

- https://github.com/random-robbie/slurp

- https://github.com/kromtech/s3-inspector

- https://github.com/petermbenjamin/s3-fuzzer

- https://github.com/jordanpotti/AWSBucketDump

- https://github.com/bear/s3scan

- https://github.com/sa7mon/S3Scanner

- https://github.com/magisterquis/s3finder

- https://github.com/abhn/S3Scan

- https://breachinsider.com/honey-buckets/

- https://www.buckhacker.com [Currently Offline]

- https://www.thebuckhacker.com/

- https://buckets.grayhatwarfare.com/

Training:

Others:

- https://github.com/nagwww/s3-leaks – a list of some biggest leaks recorded